I have a Lambda that is completely under my control and takes 5 seconds to complete on a cold start (< 2 seconds after warm-up). In long term, we are going to find a way in both perspective: extending the limit and support asynchronous use cases. As it increases, it raises risks in our service qualities. I hope you understand the flip side of the timeout. However, this leads us another question: How long is enough? YMMV, so it won't satisfy everyone until it becomes infinite. For some use cases, just extending the limit would be helpful. We understand the asynchronous way increases the complexity.

Otherwise, the message should contain the URL for the client to retrieve the actual result. The notification message may contain the result itself when it can be fit into the notification message restriction. Notify the client when the result is ready.It depends on what you want to do and how long it takes. The actual executor could be implemented with a Lambda function, Multiple Lambda functions with a Step Function, or ECS.The data in the storage could be clean up when the result is retrieved, by explicit DELETE request from the client, or expired by TTL on the storage side.Īlternatively you can use a notification. The client needs to implement polling mechanism with exponential retries. Usually you need to have additional api endpoints to poll the status and get the actual request, a storage to store the status and the result. There are several way to solve this problem with asynchronous way, but commonly it falls into either polling or notification (you can combine both as well).

It is not ideal for both client and server to wait the response for such a long period. In the API Gateway, that's 30 seconds which we believe it would be enough for most use cases. It could be 10 seconds, 30 seconds, or even 1 hour. Depends on people's mind, "long running task" could mean one taking longer than their expectation. We are suggesting to avoid executing a long running task with synchronous way. So, then need to move part of our API to ECS to support long running processes due to an unreasonably low 30 second timeout? If I was to start an async thread on the initial request in Lambda, then Lambda probably does not know about the other processing thread and it could kill my Lambda instance that is in the middle of processing the async request. If this is the case, from my understanding for Lambda we would have to create an entirely new long running process to handle these requests. Are you suggesting we make an api to requestt the data, it returns some polling token, the request is then processed by some long running process, stored in the database, and then the client just polls another api endpoint using some token until the backend has the data ready? During this time, we have to use additional storage mechanisms and cleanup for the data until retrieved and then I suppose hang on to longer in case client needs to retry? The hardwired 30 sec timeout really can be a deal breaker and cause some to have to move to ECS or some other solution. NGINX file may be located at /usr/local/nginx/conf, /etc/nginx, or /usr/local/etc/nginx depending on your installation.īonus Read : Increase File Upload Size in NGINX 2.Could you give a little more hint on what you are suggesting. Open terminal and run the following command to open NGINX configuration file in a text editor. Here are the steps to increase request timeout in NGINX.

Increase nginx gateway timeout how to#

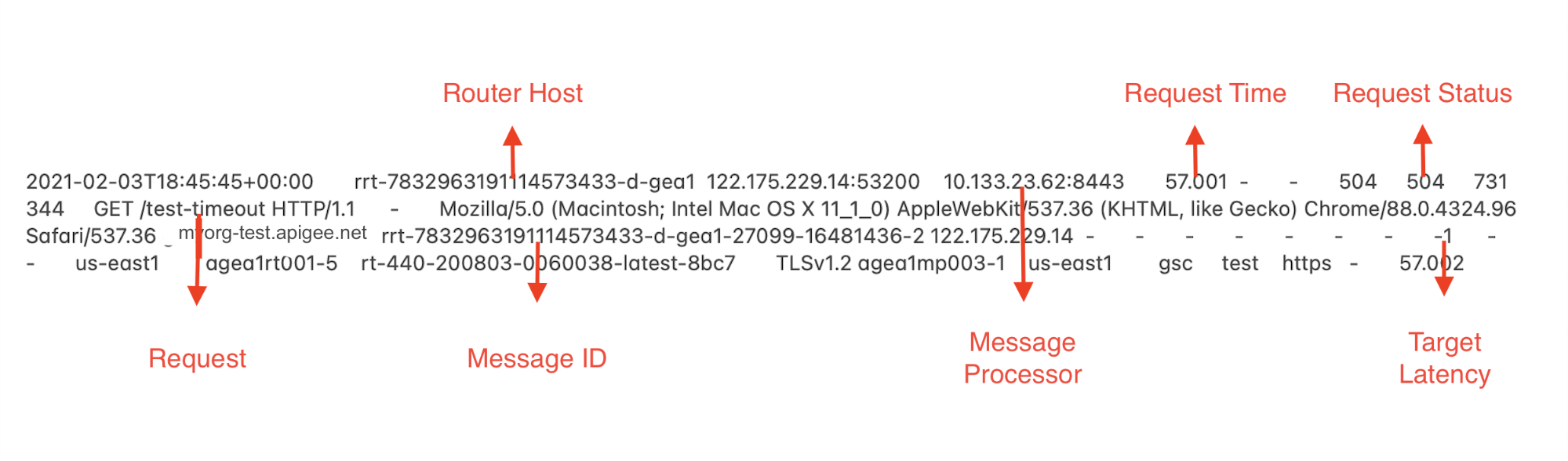

Here’s how to increase request timeout in NGINX using proxy_read_timeout, proxy_connect_timeout, proxy_send_timeout directives to fix 504 Gateway Timeout error. If you don’t increase request timeout value NGINX will give “504: Gateway Timeout” Error. Sometimes you may need to increase request timeout in NGINX to serve long-running requests. By default, NGINX request timeout is 60 seconds.
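A minimal sketch of how the three directives named above could be set. It assumes NGINX is proxying requests to an upstream. The upstream address `127.0.0.1:8080` and the 300-second values are hypothetical placeholders, not recommendations; choose values that cover your slowest legitimate request.

```nginx
# Sketch only: these directives are valid in an existing http, server, or
# location block. The upstream address and the 300s values are placeholders.
location / {
    proxy_pass http://127.0.0.1:8080;   # hypothetical upstream

    proxy_connect_timeout 60s;   # establishing the upstream connection; usually cannot exceed 75s
    proxy_send_timeout    300s;  # max gap between two successive writes to the upstream
    proxy_read_timeout    300s;  # max gap between two successive reads from the upstream
}
```

After saving the file, you can check the syntax with `nginx -t` and apply the change with `nginx -s reload`. Both commands may need `sudo`. `proxy_read_timeout` limits the gap between two successive reads, not the total response time. An upstream that keeps sending data slowly can therefore hold a request open longer than the configured value.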